In preparation for the General Data Protection Regulation (GDPR), the UK must make an active policy decision in the coming months about children and the Internet, specifically the provision of ‘Information Society Services’. From May 25, 2018, the age of consent for online content aimed at children will be 16 by default, unless UK law is made to lower it.

Age verification for online information services under the GDPR will mean capturing parent-child relationships. This could mean a parent’s email or credit card, unless other choices are made. What will that mean for children’s access to services, and for privacy? It is likely to offer companies an opportunity for a data grab, and to mean privacy loss for the public, as more data about family relationships will be created and collected than the content provider would otherwise get.

Our interactions create a blended identity of online and offline attributes which, as I suggested in a previous post, creates synthesised versions of our selves and raises questions about data privacy and security.

The goal may be to protect the physical child. The outcome, however, will be to simultaneously expose children and parents to risks that we would not otherwise face, through increased personal data collection. Increasing the data collected increases the associated risks of loss, theft, and harm to identity integrity. How will legislation balance these risks and rights to participation?

The UK government has various pieces of work in progress before then that could address these questions:

But will they?

As Sonia Livingstone wrote in the post on the LSE media blog about what to expect from the GDPR and its online challenges for children:

“Now the UK, along with other Member States, has until May 2018 to get its house in order”.

What will that order look like?

The Digital Strategy and Ed Tech

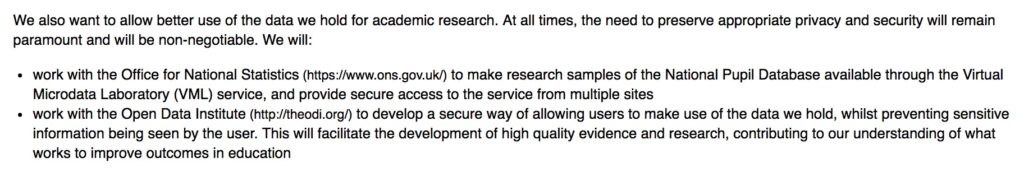

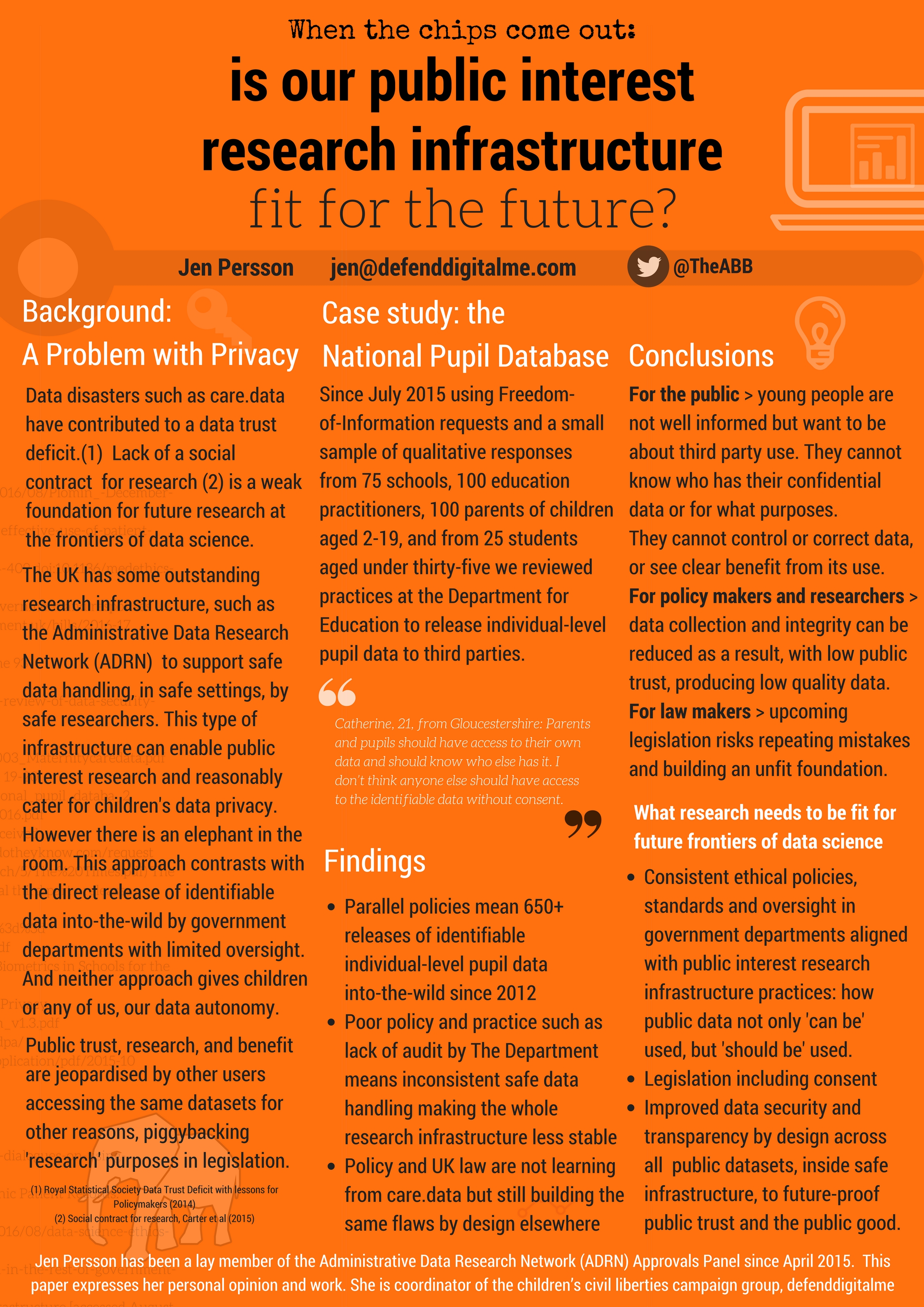

The Digital Strategy commits to changes in National Pupil Data management. That is, changes in the handling and secondary uses of data collected from pupils in the school census, like using it for national research and planning.

It also means giving data to commercial companies and the press: companies such as private tutor-pupil matching services and data intermediaries, and journalists at the Times and the Telegraph.

Access to the National Pupil Database (NPD) via the ONS Virtual Microdata Laboratory (VML) would mean safe data use, in safe settings, by safe (trained and accredited) users.

Sensitive data will no longer be seen by users for secondary uses outside safe settings. It remains to be seen how DfE intends to interpret ‘sensitive’, and whether that is the DPA 1998 term or the lay term meaning ‘identifying’, as it should be.

However, a grey area on privacy and security remains in the “Data Exchange” which will enable EdTech products to “talk to each other”.

The aim of changes in data access is to ensure that children’s data integrity and identity are secure. Let’s hope the intention that “at all times, the need to preserve appropriate privacy and security will remain paramount and will be non-negotiable” applies across all closed pupil data, and not only to that which may be made available via the VML.

This strategy is still far from clear or set in place.

The Digital Strategy and consumer data rights

The Digital Strategy commits under the heading of “Unlocking the power of data in the UK economy and improving public confidence in its use” to the implementation of the General Data Protection Regulation by May 2018. The Strategy frames this as a business issue, labelling data as “a global commodity”, and as such its handling is framed solely as a requirement needed to ensure “that our businesses can continue to compete and communicate effectively around the world” and that adoption “will ensure a shared and higher standard of protection for consumers and their data.”

The GDPR, as far as children go, is far more about the protection of children as people. It focuses on returning control over children’s own identity, and on being able to revoke control by others, rather than on consumer rights.

That said, there are data rights issues which are also consumer issues and product safety failures posing real risk of harm.

Neither the Digital Economy Bill nor the Digital Strategy addresses these rights and security issues with any meaningful effect, particularly when they are posed by the Internet of Things.

In fact, the chapter Internet of Things and Smart Infrastructure [9/19] singularly misses out anything on security and safety:

“We want the UK to remain an international leader in R&D and adoption of IoT. We are funding research and innovation through the three year, £30 million IoT UK Programme.”

There was much more thoughtful detail in the 2014 Blackett Review on the IoT to which I was signposted today after yesterday’s post.

If it’s not scary enough for the public to think that their sex secrets and devices are hackable, perhaps it will kill public trust in connected devices more when they find strangers talking to their children through a baby monitor or toy. [BEUC campaign report on #Toyfail]

“The internet-connected toys ‘My Friend Cayla’ and ‘i-Que’ fail miserably when it comes to safeguarding basic consumer rights, security, and privacy. Both toys are sold widely in the EU.”

Digital skills and training in the strategy don’t touch on any form of change management plan for existing working sectors in which we expect to see machine learning and AI change the job market. This is something the digital and industrial strategies must address hand in glove.

The tactics and training providers listed sound super, but there does not appear to be an aspirational strategy hidden between the lines.

The Digital Economy Bill and citizens’ data rights

While the rest of Europe in this legislation has recognised that a future-thinking digital world without boundaries needs future thinking on data protection and empowered citizens with better control of identity, the UK government appears intent on taking ours away.

To take only one example for children: in Cabinet Office led meetings, the Digital Economy Bill was explicit about its use for identifying and tracking individuals labelled under “Troubled Families”, and for interventions with them. Why consent is being ignored to access people’s information, when consent is required to work directly with them, is baffling and in conflict with both the spirit and letter of the GDPR. Students and applicants will see their personal data sent to the Student Loans Company without their consent or knowledge. This overrides the current consent model in place at UCAS.

It is baffling that the government is relentlessly pursuing the Digital Economy Bill data copying clauses, which remove confidentiality by default and will release our identities in birth, marriage and death data for third party use without consent through Chapter 2, the opening of the Civil Registry, without any safeguards in the bill.

Government has not only excluded important aspects of Parliamentary scrutiny in the bill, it is also trying to introduce “almost untrammeled powers” (paragraph 21) that will “very significantly broaden the scope for the sharing of information”, with “specified persons” applying “whether the service provider concerned is in the public sector or is a charity or a commercial organisation”, and non-specific purposes for which the information may be disclosed or used. [Reference: Scrutiny committee comments]

Future changes need future joined up thinking

While it is important to learn from the past, I worry that the effort some social scientists put into looking backwards is not matched by enthusiasm to look ahead and make active recommendations for a better future.

Society appears to have its eyes wide shut to the risks of coercive control and nudge as research among academics and government departments moves in the direction of predictive data analysis.

Uses of administrative big data and publicly available social media data in research and statistics, for example, need further new regulation in practice and policy, but instead the Digital Economy Bill looks only at how more data can be got out of Department silos.

A certain intransigence about data sharing with researchers from government departments is understandable. What’s the incentive for DWP to release data showing its policy may kill people?

Westminster may fear it has more to lose from data releases, and doesn’t seek out the political capital to be had from good news.

The ethics of data science are applied patchily at best in government, and inconsistently in academic expectations.

Some researchers have identified this, but there seems little will to act:

“It will no longer be possible to assume that secondary data use is ethically unproblematic.”

[Data Horizons: New forms of Data for Social Research, Elliot, M., Purdam, K., Mackey, E., School of Social Sciences, The University Of Manchester, 2013.]

Research and legislation alike seem hell bent on the low hanging fruit but miss out the really hard things. What meaningful benefit will come from spending millions of pounds on exploiting these personal data and opening our identities to risk, just to find out whether course X means people are employed in tax bracket Y five years later, versus course Z, where everyone ends up as self-employed artists? What ethics will be applied to the outcomes of those questions, and to why they are asked?

And while government is busy joining up children’s education data throughout their lifetimes from age 2 across school, FE, HE, into their HMRC and DWP interactions, there is no public plan in the Digital Strategy for the employment market of the coming 10 to 20 years, when many believe, as do these authors in Scientific American, that “around half of today’s jobs will be threatened by algorithms. 40% of today’s top 500 companies will have vanished in a decade.”

What benefit will there be in knowing what was, when the plans around workforce and digital skills list ad hoc tactics but no strategy?

We must safeguard jobs and societal needs, but just teaching people to code is not a solution to a fundamental gap in what our purpose will be, and what the place of people will be, in a world-leading tech nation after Brexit. We are going to have fewer talented people from across the world staying on after completing academic studies, because they’re not coming at all.

There may be investment in A.I. but where is the investment in good data practices around automation and machine learning in the Digital Economy Bill?

To do this Digital Strategy well, we need joined up thinking.

Improving online safety for children in The Green Paper on Children’s Internet Safety should mean one thing:

Children should be able to use online services without being used and abused by them.

This article arrived on my Twitter timeline via a number of people. Doteveryone CEO Rachel Coldicutt summed up various strands of thought I started to hear hints of last month at #CPDP2017 in Brussels:

“As designers and engineers, we’ve contributed to a post-thought world. In 2017, it’s time to start making people think again.

“We need to find new ways of putting friction and thoughtfulness back into the products we make.” [Glanceable truthiness, 30.1.2017]

Let’s keep the human in discussions about technology, and people first in our products

All too often in technology and even privacy discussions, people have become ‘consumers’ and ‘customers’ instead of people.

The Digital Strategy may seek to unlock “the power of data in the UK economy” but policy and legislation must put equal if not more emphasis on “improving public confidence in its use” if that long term opportunity is to be achieved.

And in technology discussions about AI and algorithms we hear very little about people at all. The discussions I hear seem siloed instead into three camps: the academics, the designers and developers, and the politicians and policy makers. And then comes the lowest circle, ‘the public’ and ‘society’.

It is therefore unsurprising that human rights have fallen down the ranking of importance in some areas of technology development.

It’s time to get this house in order.