Smart cities: private reach in public space and personal lives

Smart-cities are growing in the UK through private investment and encroachment on public space. They are being built by design at home, and supported by UK money abroad, with enormous expansion plans in almost 100 cities in India, for example.

With this rapid expansion of “smart” technology, not only within our living rooms but across our living space and indeed all areas of life, how do we ensure that equitable service delivery (what citizens generally want, as demonstrated by strength of feeling on the NHS) continues in public ownership, when the boundary between public and private corporate ownership is ever more blurred in current policy?

How can we know, and plan by design, that the values we hope for are good values, and that they will be embedded in systems, in policies and planning? Values that most people really care about. How do we ensure “smart” does not ultimately mean less good? That “smart” does not, in the end, mean less human.

Economic benefits seem to be the key driver in current government thinking around technology – more efficient = costs less.

While using technology to replace repetitive work may be positive, how will we accommodate those whose skills will no longer be needed? In particular, consider its gendered aspect and the more vulnerable in the workforce, since it is women and other minorities who work disproportionately in our part-time, low-skill jobs. Jobs that are mainly held by women, even those we think of as intrinsically human, such as caring, are being trialled for outsourcing to, or assistance by, technology. These robots monitor people in their own homes and reduce staffing levels and care home occupancy. We’ll no doubt hear how good it is that we need fewer carers because, after all, we have a shortage of care staff. We’ll find out whether it is positive for the cared-for, or whether they find it less ‘human’[e]. How will we measure those costs?

The ideal future of us all therefore having more leisure time sounds fab, but if we can’t afford it, we won’t be spending more of our time employed in leisure. Some think we’ll simply be unemployed. And more people live in the slums of Calcutta than in Soho.

One of the greatest benefits of technology is how much more connected the world can be, but will it also be more equitable?

There are benefits in remote sensors monitoring changes in the atmosphere that dictate when cars should be taken off the roads on smog-days, or indicators when asthma risk-factors are high.

Crowdsourced information about things which are broken, as with fix-my-street, or about lifts that are out of order, is invaluable in cities for wheelchair users.

Innovative thinking and building things through technology can create things which solve simple problems and add value to the person using the tool.

But what of the people that cannot afford data, cannot be included in the skilled workforce, or will not navigate apps on a phone?

How this dis-incentivises the person using the technology not only leads to their disappointment with the tool, but also affects service delivery and, potentially wider still, contributes to societal exclusion or stigma. These were the findings of the e-red book in Glasgow, explained at the Digital event in health held at the King’s Fund in summer 2015.

Further along the scale of systems and potential for negative user experience, how do we expect citizens to react to finding punishments handed out by unseen monitoring systems, finding out our behaviour was ‘nudged’, or finding decisions taken about us, without us?

And what is the oversight and system of redress for people using systems, or whose data are used but inaccurate in a system, and cause injustice?

And wider still, while we encourage big money spent on big data in our part of the world, how is it contributing to solving problems for the millions for whom they will never matter? Digital and social media make our one connected world increasingly transparent, with even less excuse for closing our eyes.

Approximately 15 million girls worldwide are married each year – that’s one girl, aged under 18, married off against her will every two seconds. [Huff Post, 2015]

Tinder-type apps are luxury optional extras for many in the world.

Without embedding values and oversight into some of what we do through digital tools implemented by private corporations for profit, ‘smart’ could mean less fair, less inclusive, less kind. Less global.

If digital becomes a destination, and how much of it is implemented is seen as a measure of success, then measuring how “smart” we become risks losing sight of technology as solutions and steps towards solving real problems for real people.

We need to be both clever and sensible, in our ‘smart’.

Are public oversight and regulation built in to make ‘smart’ also be safe?

If there were public consultation on how “smart” society will look, would we all agree if and how we want it?

Thinking globally, we need to ask whether we are prioritising the wrong problems. Are we creating more tech for problems we have already invented solutions for, in places where governments are willing to spend on them? And will it, in those places, make society more connected across class and improve it for all, or enhance the lives of the ‘haves’ by having more, while the ‘have-nots’ are excluded?

Does it matter how smart your TV gets, or carer, or car, if you cannot afford any of these convenient add-ons to Life v1.1?

As we are ever more connected, we are a global society, and being ‘smart’ in one area may be reckless if at the expense or ignorance of another.

People need to understand what “smart” means

“Consistent with the wider global discourse on ‘smart’ cities, in India urban problems are constructed in specific ways to facilitate the adoption of “smart hi-tech solutions”. ‘Smart’ is thus likely to mean technocratic and centralized, undergirded by alliances between the Indian government and hi-technology corporations.” [Saurabh Arora, Senior Lecturer in Technology and Innovation for Development at SPRU]

Those investing in both countries are often the same large corporations. Very often, venture capitalists.

Systems designed and owned by private companies provide the information technology infrastructure that is:

‘the basis for providing essential services to residents. There are many technological platforms involved, including but not limited to automated sensor networks and data centres.’

What happens when the commercial and public interest conflict and who decides that they do?

Decision making, Mining and Value

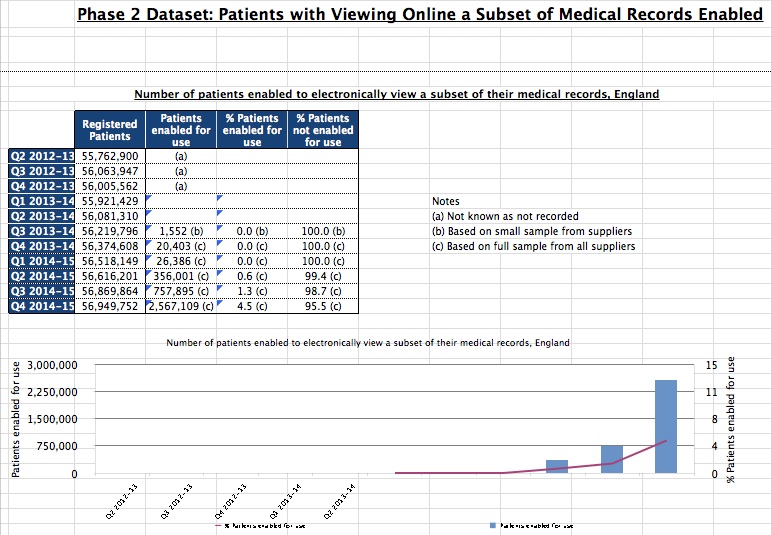

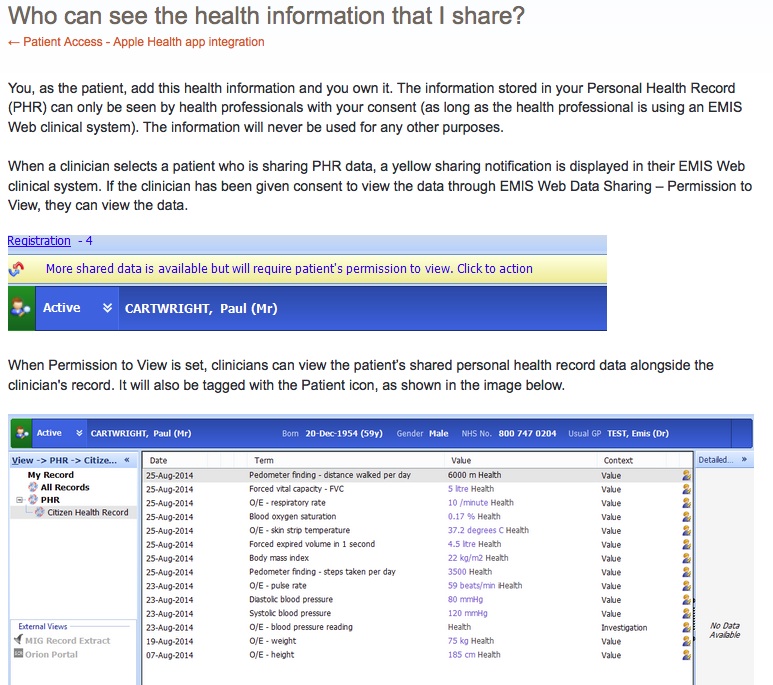

Massive amounts of data generated are being mined for making predictions, decisions and influencing public policy: in effect using Big Data for research purposes.

Today, using population-wide datasets for social and economic research is done in safe settings, using de-identified data, in the public interest, with independent analysis of the risks and benefits of projects as part of the data access process.

Each project goes before an ethics committee, which reviews its privacy considerations and assesses not only whether the project can be done but whether it should be done, before it comes for central review.

Similarly, our smart-cities need ethics committee review assessing the privacy impact and potential of projects before commissioning or approving smart-technology. Not only assessing if they are feasible, and that we ‘can’ do it, but whether we ‘should’ do it. Not only assessing the use of the data generated from the projects, but assessing the ethical and privacy implications of the technology implementation itself.

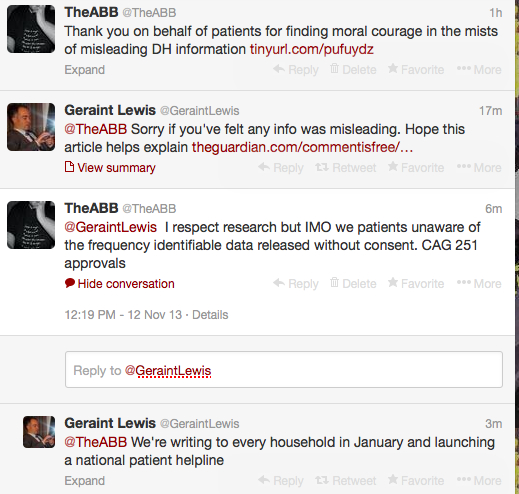

The Committee recommendations on Big Data recently proposed that a ‘Council of Data Ethics’ should be created to explicitly address these consent and trust issues head on. But how?

Unseen smart-technology continues to grow unchecked, often taking root in the cracks between public-private partnerships.

We keep hearing about Big Data improving public services but that “public” data is often held by private companies. In fact our personal data for public administration has been widely outsourced to private companies of which we have little oversight.

We’re told we paid the price in terms of skills and are catching up.

But if we simply roll forward in first gear into the connected city that sees all, we may find we arrive at a destination that was neither designed nor desired by the majority.

We may find that the “revolution, not evolution”, hoped for in digital services will be of the unwanted kind if companies keep pushing more and more for more data without the individual’s consent and our collective public buy-in to decisions made about data use.

Having written all this, I’ve now read the Royal Statistical Society’s publication which eloquently summarises their recent work and thinking. But I wonder how we tie all this into practical application?

How we do governance and regulation is tied tightly into the practicality of public-private relationships, but also into deciding what society should look like. That is what our collective and policy decisions about what smart-cities should be and may do are ultimately defining.

I don’t think we are yet addressing in depth the complexity of the regulation and governance that will be needed to make Big Data and public spaces safe, because companies say too much regulation risks choking off innovation and creativity.

But that risk need not be realised if it is managed well.

Rather we must see action to manage the application of smart-technology in a thoughtful way quickly, because if we do not, very soon, we’ll have lost any say in how our service providers deliver.

*******

I began my thoughts about this in Part one, on smart technology and data from the Sprint16 session and after this (Part two), continue to look at the design and development of smart technology making “The Best Use of Data” with a UK company case study (Part three) and “The Best Use of Data” used in predictions and the Future (Part four).