When I drop my children at school in the morning I usually tell them three things: “Be kind. Have fun. Make good choices.”

I’ve been thinking recently about what a positive and sustainable future for them might look like. What will England be like in 10 years?

The #Sprint16 snippets I read talk about how “Digital is changing how we deliver every part of government,” and about “harnessing the best of digital and technology, and the best use of data to improve public services right across the board.”

From that, three things jumped out at me:

- The first is that the government’s idea of the “best use of data” may conflict with the citizen’s.

- The second is how to define “public services” right across the board in a world in which the boundaries between private and public provision of services have become increasingly blurred.

- And the third is the power of tech to offer both opportunity and risk if used in “every part of government”, and its effects on access to, involvement in, and the long-term future of democracy.

What’s the story so far?

In my experience so far of trying to be a digital citizen “across the board”, I’ve seen a few systems come and go. I still have my little floppy paper Government Gateway card, navy blue with yellow and white stripes. I suspect it is obsolete. I was a registered Healthspace user, and used it twice. It too is obsolete. I tested my GP online service. It was a mixed experience.

These user experiences are shaping how I interact with new platforms and my expectations of organisations, and I will be interested to see what the next iteration, NHS alpha, offers.

How platforms and organisations interact with me, and with my data, is however increasingly assumed without consent. This involves not only using data from administrative or commercial settings to which I have agreed, but new collection: the scooping up of personal data all around us in “smart city” applications.

Simply having these digital applications will bring no benefit, while all the disadvantages of surveillance for its own sake will be realised.

So how do we know how all these collected data are used, and by whom? How do we ensure that all the tracking actually gets turned into knowledge about pedestrian and traffic flow to make streets and roads safer and smoother in their operation, to make street lighting more efficient, or the environment better to breathe in and enjoy? And that we don’t just gift private providers tonnes of valuable data which they simply pass on to others for profit?

Because without making things better, this Internet-of-Things will be a one-way ticket to power in the hands of providers, and to a loss of control and quality of life for the rest of us. We’ll work around it, by buying a separate SIM card for trips into London, avoiding certain parks or bridges, managing our FitBits to the nth degree under a pseudonym. But being left no choice but to opt out of places to visit, or of the latest technology to enjoy, is also tedious. If we want to buy a smart TV to access films on demand, but don’t want it to pass surveillance or tracking information back to the company, how can we find out with ease which products offer that choice?

Companies have taken private information that is none of their business, and quite literally, made it their business.

The consumer technology hijack of “smart”, so that it always means marketing surveillance, creates a divide between those who will comply for convenience and pay the price in their privacy, and those who prize privacy highly enough to take steps that are less convenient, but less compromised.

But even for those who want the latter, it can be so hard to find out how to do it that people feel powerless and give in to the easy option on offer.

Today’s system of governance and oversight, which manages how our personal data are processed by providers of public and private services in both public and private space, is insufficient to meet what most people reasonably expect: to be able to live their lives without interference.

We’re busy playing catch-up with managing processing and use, when many people would like to be able to control collection.

The Best Use of Data: Today

As for how the government wants to ‘best use data’, until 2013 I assumed the State was responsible with it.

I feel bitterly let down.

care.data taught me that the State thinks my personal data and privacy are something to exploit, and that “the best use of my data” for them may be quite at odds with what individuals expect. My trust in the use of my health data by government has been low ever since. Saying one thing and doing another isn’t making it more trustworthy.

In 2014 I found out how my children’s personal data are commercially exploited by the Department for Education and given to third parties, including the press, outside safe settings. Now my trust is at rock bottom. I tried to take a look at what the National Pupil Database stores on my own children and my subject access request was refused, while the commercial sector and the Fleet Street press are given not only identifiable data, but ‘highly sensitive’ data. This just seems plain wrong in terms of security, transparency and respect for the person.

The attitude that there is an entitlement of the State to individuals’ personal data has to go.

The State has pinched 20 million children’s privacy without asking. Tut Tut indeed. [see Very British Problems for a translation].

And while I support the use of public administrative data in de-identified form in safe settings, that does not mean anything goes. But the sense of entitlement to access our personal data for purposes other than those for which we consented is growing, and it is stretching to commercial sector data. However, suggesting that public feeling, measured through work with 0.0001% of the population, amounts to “wide public support for the use and re-use of private sector data for social research” seems tenuous.

Even so, comments from that tiny sample suggested that “many participants were taken by surprise at the extent and size of data collection by the private sector” and that some “felt that such data capture was frequently unwarranted.” “The principal concerns about the private sector stem from the sheer volume of data collected with and without consent from individuals and the profits being made from linking data and selling data sets.”

The Best Use of Data: The Future

Young people, despite their elders often saying “they don’t care about privacy”, are leaving social media in search of greater privacy.

These things cannot be ignored if the call for digital transformation between the State and the citizen is genuine, because if you try to do it to us, it will fail. Change must be done with us. And ethically.

And not “ethics” as in ‘how to’, but the ethics of “should we?” That means qualified, transparent evaluation, as is done in other research areas, not as an add-on but integral to every project, looking at issues such as:

- whether participation is voluntary, opt-out or covert

- how participants can get and give informed consent

- accessibility to information about the collection and its use

- whether small numbers of people, particularly vulnerable people, are included

- identifiable data collection or disclosure

- arrangements for dealing with disclosures of harm and recourse

- and how the population that will bear the risks of participating in the research is likely to benefit, or not, from the knowledge derived from it.

Ethics is not about getting away with using personal data in ways that won’t get you caught, or hauled over the coals by civil society.

It’s about balancing risk and benefit in the public interest: not always favouring the majority, but doing what is right and fair.

We hear a lot at the moment about how the government sees lives shaped by digital skills, but too little of its vision for what living will look and feel like in the smart cities of the future.

My starting question is, how does government hope society will live there, and is it up to them to design it? If not, who is? These smart-city systems are not designing themselves. You’ve heard of Stepford wives. I wonder what we do if we do not want to live like Milton Keynes man?

I hope that the world my children will inherit will be more just, more inclusive, kinder, and with a more sustainable climate to support food and livelihoods than it is today. Will ‘smart’ help or hinder?

What is rarely covered in technology discussions is how a service should look regardless of technology. Instead, the technology is assumed to be inevitable and becomes the centre of service delivery.

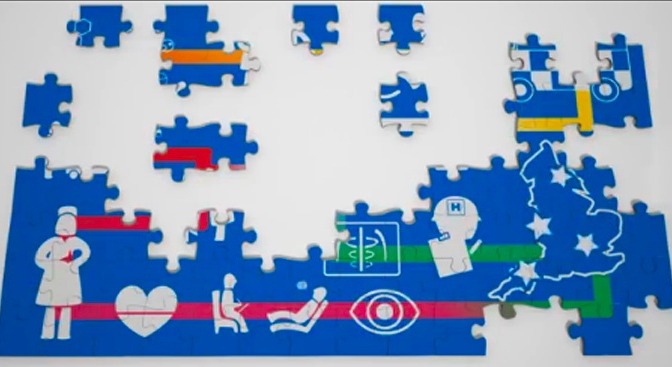

I’d like first to understand: what is the central and local government vision for the provision of “public services” for people in the future? What does it mean for everyday services like schools and health, and how does it balance security and our freedoms?

Because without thinking about how, and by whom, those services are provided for people, there is a hole in the discussion of “the best use of data” and their improvement “right across the board”.

The UK government has big plans for big data sharing across all public bodies, some of it tailored for individual interventions.

While there are interesting opportunities for public benefit from at-scale systems, that public benefit is at risk not only from a lack of trust in how systems gather and use data, but also from the chance that interoperability between services, and the freedom for citizens to change provider, gets lost in market competition.

Openness and transparency can be absent in public-private partnerships until things go wrong. Given the scale of smart cities, we must have more than hope that data management and security will not be one of those things.

How will we know if new plans are designed well, or not?

When I look at my children’s future and how our current government digital decision making may affect it, I wonder if their future will be more or less kind. More or less fun.

Will they be left with the autonomy to make good choices of their own?

The hassle of feeling watched all the time, by everything that we own, in every place we go, and of having to check that every checkbox has a reasonable privacy setting, has a cumulative cost in our time and anxieties.

Smart technology has invaded not only our public space and our private space, but has nudged into our head space.

I for one have had enough already. For my kids I want better. Technology should mean progress for people, not tyranny.

Living in smart cities, connected in the Internet-of-Things, run on their collective Big Data and paid for by commercial corporate providers, threatens not only their private lives and well-being, and their individual and independent lives, but ultimately independent and democratic government as we know it.

*****

This is the start of a four-part set of thoughts: Beginnings with smart technology and data, triggered by the Sprint16 session (Part one). I think about this in more depth in “Smart systems and Public Services” (Part two) here, then in the design and development of smart technology making “The Best Use of Data”, looking at today in a UK company case study (Part three), before thoughts on “The Best Use of Data” used in predictions and the Future (Part four).