This week the Minister for Life Sciences, George Freeman MP, caused a furore in the Mirror and the wider media by using the phrase “the politics of envy” in Parliament.

The paper reported that the Labour frontbencher Stella Creasy said she was shocked:

“Following the law isn’t the politics of envy, it’s the politics of justice.”

It was in a debate on the minimum wage, in response to questions from other MPs about why so few firms had been prosecuted since 2010 for not paying the legal minimum wage.

Nine firms had been charged for non-compliance since 2010.

He said: “Prosecutions may satisfy the politics of envy of the Opposition, but they are not the best mechanism to drive compliance.”

What a contrast between Mr Freeman’s remarks and what I saw first-hand in prosecutions at the Magistrates’ Court last week.

I saw a 32-year-old man prosecuted and told to pay £178 in fines and costs, for stealing a £13.99 bottle of vodka from Aldi.

A young builder, prosecuted for a 3am drunken lunge which he can’t remember and which missed its mark, who would have the same £178 in fines and costs deducted weekly from his benefits.

A 15-year-old who, without a lawyer or parents, and without having read the paperwork on his charges, pleaded guilty in an adult court to stealing a bicycle wheel, and then had to wait around on the off chance that a juvenile-trained magistrate could hear the whole thing again to sentence him.

A homeless man pleaded guilty to handling a set of stolen hair straighteners. He needed healthcare, not prosecution.

EDF was in, getting court orders for forced entry to homes which would be cut off for non-payment of energy bills.

If “prosecutions are not the best mechanism to drive compliance” for big firms who exploit their staff, why is prosecution the mechanism we use every single day to punish the weakest in society?

It was a sad procession of petty crimes driven, not by envy, but by desperation – homelessness, unemployment and alcoholism.

Some defendants were grumpy, most bashful, and quite clearly, none were happy. There was not one of them who showed any hope.

The teenager looked fed up with the system, and looking him in the eye, I saw someone the system has clearly already let down.

In a society which is so imbalanced, and with MPs earning well, some having second jobs, you cannot blame some people for feeling that MPs don’t deserve our trust. Or that some appear to have little empathy for those who rarely have a positive bank balance.

People sanctioned for reasons few understand, prosecuted when life gets out of control. Neither helps the person who is punished.

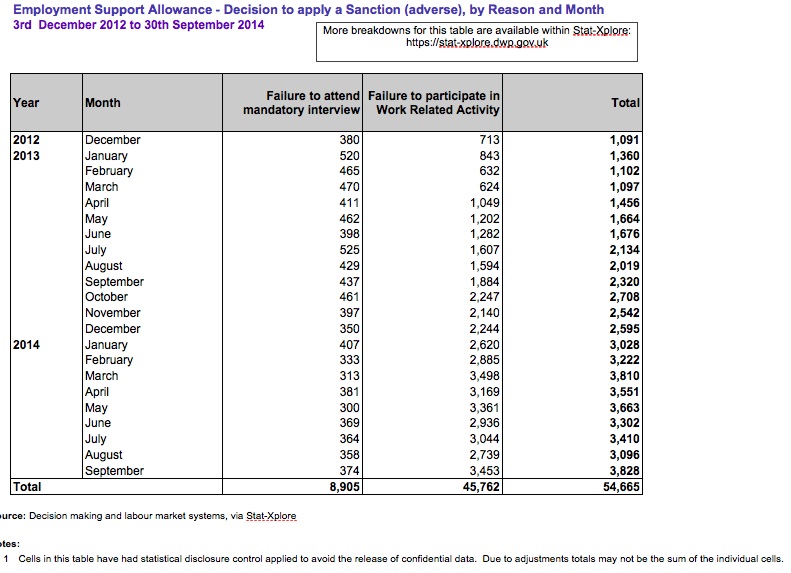

What jobs are these people being offered – or are we asking those who cannot work to do so – when the number of those sanctioned for not ‘participating in work related activity’ has steadily increased?

Wouldn’t it be nice if we could find a smarter alternative to prosecutions? I agree with George that “they are not the best mechanism to drive compliance”, albeit in a different context.

Can we stop punishing the poor by making them poorer?

While I am sure it’s a worthy small business to champion, Mr Freeman’s Twitter feed says he was popping in to buy a jumper at the end of February, and the only one shown on the shop website is the Merino and Alpaca Roll Neck priced at £189.00.

I’m not making a personal criticism, nor am I envious of anyone being able to buy a luxury sweater without much apparent need to budget for it. Mr Freeman’s business background and investments speak for themselves.

But it does illustrate the enormous gulf between the everyday lives of some elected representatives and those of the electorate. His words underline it.

The use of these soundbites by MPs is common across the board, but it is harmful to debate and stops many issues being properly discussed. It avoids further discussion by changing the subject.

It’s not the first time we’ve seen this turn of phrase. Looking back to last summer, Owen Jones wrote about it in the Guardian.

I find I have mixed reactions to Jones’ views, but on the politics of envy, he summed it up rather well:

“The left, goes this narrative, is really driven by envy and spite towards those of pampered backgrounds.

“The “politics of envy” accusation attempts to shut down even the mildest attempts at social justice. It materialises when Labour suggests a 50% top rate of tax for all earnings above £150,000. The right screams “politics of envy” at a mansion tax – while championing the bedroom tax, which falls on the shoulders of disabled people and the poor.”

The convenient soundbite turned a debate on fair wages into yet another political counter; the defensive move became an attack.

But it’s an attack on the wrong things if we want a society which works, in all senses of the word.

Envy has nothing to do with social justice and fairness, and this case, as Stella Creasy pointed out, was about following the law.

It was about the application of a law designed to protect workers from exploitation and to make sure it’s financially worth working at all.

It’s a safeguard which isn’t even aiming for best practice, only at protecting the majority of workers from the worst.

It should be part of wider employment measures which also prevent these kinds of extreme exploitation becoming more widespread.

Let’s face it, minimum wage rates aren’t decent living wages.

As we approach the General Election, I hope candidates will look in the mirror and ask themselves, why do you want to stand?

Who do you represent and serve, and what kind of society do you want to live in? What society will your children and mine inherit?

The ‘politics of envy’ talk only poisons the real subjects of debate by turning them into party political soundbites, when what we need are real solutions to real social issues.

Wouldn’t it be nice if this election campaign could address them with substance?

What would fair wages pay and how could we achieve them?

What would a truly just Justice System look like?

Now that would be a leaders’ debate worth having.